
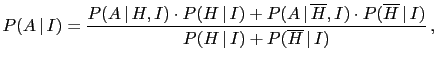

Formally, Eq. (33) follows from Eq. (32) and basic rule 4. Its interpretation is that the probability of any hypothesis can be seen as a 'weighted average' of conditional probabilities, with weights given by the probabilities of the conditionands. Remember that $P(B\,|\,I) + P(\overline{B}\,|\,I) = 1$, and therefore Eq. (33) can be rewritten as

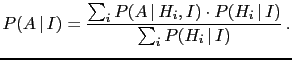
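As a numerical illustration of this weighted-average reading, here is a minimal Python sketch. The function name `total_probability` and the input values are hypothetical, chosen only for the example; the explicit division by the sum of the weights is kept to show the averaging, even though that sum equals 1.

```python
def total_probability(p_a_given_b, p_a_given_not_b, p_b):
    """P(A) as a weighted average of P(A|B) and P(A|not B).

    The weights are P(B) and P(not B) = 1 - P(B).
    """
    if not 0.0 <= p_b <= 1.0:
        raise ValueError("p_b must lie in [0, 1]")
    p_not_b = 1.0 - p_b
    numerator = p_a_given_b * p_b + p_a_given_not_b * p_not_b
    denominator = p_b + p_not_b  # sum of the weights, equal to 1
    return numerator / denominator


# Illustrative (made-up) inputs: P(A|B) = 0.9, P(A|not B) = 0.2, P(B) = 0.3
p_a = total_probability(0.9, 0.2, 0.3)
print(p_a)  # approximately 0.41
```

Being a weighted average, the result always lies between the two conditional probabilities, here between 0.2 and 0.9.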

Eqs. (34) and (35) are simple extensions of Eqs. (32) and (33) to a generic 'complete class', defined as a set of mutually exclusive hypotheses [$B_i \cap B_j = \emptyset$ for $i \neq j$, i.e. $P(B_i, B_j\,|\,I) = 0$], of which at least one must be true [$\cup_i B_i = \Omega$, i.e. $\sum_i P(B_i\,|\,I) = 1$]. It follows then that Eq. (35) can be rewritten as the ('more explicit') weighted average